Master Python, ML & Data Science

Clear, professional tutorials designed for students and professionals. Learn with hands-on code examples, comprehensive guides, and a supportive community.

Why Learn with Data Logos?

Everything you need to excel in data science

Probability and Statistics for Deep Learning

By the end of this note, you will understand random variables, expectation, variance, and key distributions, apply Bayes’ theorem and MLE in ML/DL settings, explain the bias–variance tradeoff and its link to overfitting and underfitting, and use core information theory concepts like entropy and KL divergence in loss functions and model evaluation.
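As a small illustration of the information theory concepts named above, the following sketch computes entropy, cross-entropy, and KL divergence for two discrete distributions in plain Python. The distributions `p` and `q` are made-up values chosen for illustration, and the functions assume `q` is nonzero wherever `p` is nonzero.

```python
import math

def entropy(p):
    """Shannon entropy H(p) in nats of a discrete distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    """Cross-entropy H(p, q) = -sum_i p_i * log(q_i)."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q):
    """KL(p || q) = sum_i p_i * log(p_i / q_i); assumes q_i > 0 where p_i > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical distributions, not taken from any dataset
p = [0.7, 0.2, 0.1]  # "true" distribution
q = [0.5, 0.3, 0.2]  # model's predicted distribution

print("entropy:", entropy(p))
print("cross-entropy:", cross_entropy(p, q))
print("KL divergence:", kl_divergence(p, q))
```

The three quantities are tied together by the identity H(p, q) = H(p) + KL(p || q), which is why minimizing a cross-entropy loss against fixed targets also minimizes the KL divergence to them.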

Deep Learning - A Comprehensive Guide

Deep Learning is a subset of Machine Learning that uses multi-layered neural networks to automatically learn complex patterns from data such as images, text, audio, and video.

Backpropagation

Backpropagation (or backprop) is the algorithm used to compute the gradient of the loss with respect to every parameter in a neural network. It does this by applying the chain rule layer by layer, starting from the loss at the output and moving backward through the network.
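The chain-rule procedure described above can be sketched on a deliberately tiny network: one input, one tanh hidden unit, one linear output, and a squared-error loss. The architecture, parameter names, and numeric values are assumptions made for illustration, not a general implementation.

```python
import math

def forward(x, y, w1, b1, w2, b2):
    """Forward pass: returns the intermediate values and the loss."""
    z = w1 * x + b1               # hidden pre-activation
    h = math.tanh(z)              # hidden activation
    y_hat = w2 * h + b2           # linear output
    loss = 0.5 * (y_hat - y) ** 2
    return z, h, y_hat, loss

def backward(x, y, w1, b1, w2, b2):
    """Backward pass: chain rule from the loss back to each parameter."""
    z, h, y_hat, loss = forward(x, y, w1, b1, w2, b2)
    d_yhat = y_hat - y            # dL/dy_hat
    d_w2 = d_yhat * h             # dL/dw2
    d_b2 = d_yhat                 # dL/db2
    d_h = d_yhat * w2             # dL/dh
    d_z = d_h * (1.0 - h ** 2)    # dL/dz, using tanh'(z) = 1 - tanh(z)^2
    d_w1 = d_z * x                # dL/dw1
    d_b1 = d_z                    # dL/db1
    return {"w1": d_w1, "b1": d_b1, "w2": d_w2, "b2": d_b2}

# Hypothetical input, target, and parameters
x, y = 0.5, 1.0
w1, b1, w2, b2 = 0.8, -0.1, 1.5, 0.2

grads = backward(x, y, w1, b1, w2, b2)
print(grads)
```

Each gradient reuses the quantity computed just before it (`d_yhat`, then `d_h`, then `d_z`), which is the "moving backward through the network" part of the description.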

Optimizers

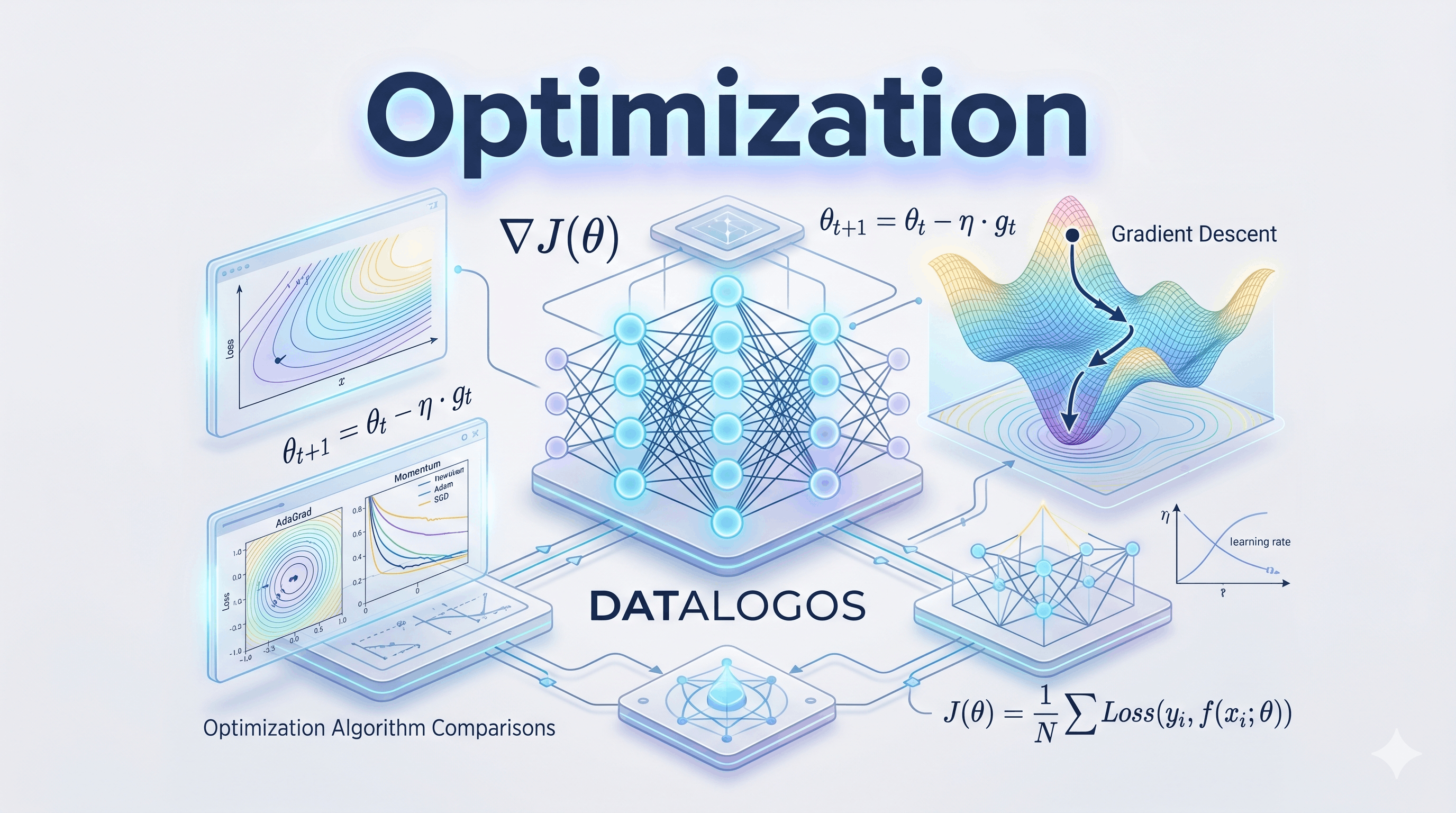

An optimizer (or optimization algorithm) is the component that takes the gradients of the loss with respect to the parameters (computed by backpropagation) and produces the actual parameter updates.
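To make the "gradients in, parameter updates out" role concrete, here is a minimal sketch of one common optimizer, SGD with momentum, written as a pure function over lists of floats. The function name, the default hyperparameters, and the example gradients are assumptions for illustration; real frameworks provide their own optimizer classes.

```python
def sgd_momentum_step(params, grads, velocity, lr=0.1, momentum=0.9):
    """Apply one SGD-with-momentum update.

    velocity <- momentum * velocity - lr * grad
    param    <- param + velocity
    Returns new lists; the inputs are left unchanged.
    """
    new_velocity = [momentum * v - lr * g for v, g in zip(velocity, grads)]
    new_params = [p + v for p, v in zip(params, new_velocity)]
    return new_params, new_velocity

# Hypothetical parameters and gradients (as if produced by backpropagation)
params = [1.0, -2.0]
grads = [0.5, -1.0]
velocity = [0.0, 0.0]

params, velocity = sgd_momentum_step(params, grads, velocity)
print(params, velocity)
```

With zero initial velocity, the first step is identical to plain gradient descent; on later steps the accumulated velocity adds to the update when successive gradients point the same way.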

Loss Functions

A loss function (or cost function) is a scalar function that measures how wrong the model’s predictions are compared to the true targets. It takes predictions and targets as inputs and outputs a single number: the higher the loss, the worse the model is doing.
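Two widely used loss functions, mean squared error and binary cross-entropy, can be sketched in a few lines. The targets and the two sets of predictions are invented values, chosen only to show that worse predictions yield a higher loss.

```python
import math

def mse(predictions, targets):
    """Mean squared error: average of the squared differences."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def binary_cross_entropy(probabilities, targets, eps=1e-12):
    """Average negative log-likelihood of 0/1 targets under predicted probabilities."""
    total = 0.0
    for p, t in zip(probabilities, targets):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(targets)

# Hypothetical targets and two candidate sets of predictions
targets = [1, 0, 1, 1]
good_predictions = [0.9, 0.1, 0.8, 0.7]
bad_predictions = [0.4, 0.6, 0.3, 0.2]

print("MSE good:", mse(good_predictions, targets))
print("MSE bad: ", mse(bad_predictions, targets))
print("BCE good:", binary_cross_entropy(good_predictions, targets))
print("BCE bad: ", binary_cross_entropy(bad_predictions, targets))
```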

Activation Functions

Think of a volume knob that doesn’t just multiply the signal linearly: it might squash loud sounds (saturation), cut off negative values (ReLU), or smoothly compress everything into a fixed range (sigmoid).
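The three behaviours in the analogy correspond to functions that can be written directly. This is a minimal scalar sketch in plain Python; the sample inputs are arbitrary, and deep learning libraries ship vectorized versions of the same functions.

```python
import math

def relu(x):
    """Cuts off negative values, passes positive values unchanged."""
    return max(0.0, x)

def sigmoid(x):
    """Smoothly compresses any real input into the range (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)  # branch on sign to avoid overflow for very negative x
    return e / (1.0 + e)

def tanh(x):
    """Compresses input into (-1, 1) and saturates for large |x|."""
    return math.tanh(x)

for x in [-5.0, -1.0, 0.0, 1.0, 5.0]:
    print(f"x={x:+.1f}  relu={relu(x):.3f}  sigmoid={sigmoid(x):.3f}  tanh={tanh(x):.3f}")
```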

Artificial Neuron And Perceptron

An artificial neuron is the smallest computational unit in a neural network. It takes several numeric inputs, multiplies each by a weight, adds a bias, and passes the result through an activation function to produce one output.
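The description above (weighted sum, plus bias, through an activation) maps onto a few lines of code. The sketch below pairs the neuron with a step activation, which gives a perceptron; the weights and bias are hand-picked assumptions that happen to implement a logical AND, not values learned from data.

```python
def step(z):
    """Step activation used by the classic perceptron."""
    return 1 if z >= 0 else 0

def neuron(inputs, weights, bias, activation):
    """Weighted sum of inputs plus bias, passed through an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

# Hand-picked (hypothetical) parameters that make the neuron act as an AND gate
weights = [1.0, 1.0]
bias = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", neuron([a, b], weights, bias, step))
```

Swapping `step` for a differentiable activation such as a sigmoid leaves `neuron` unchanged, which is why the same unit serves as the building block of larger networks.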

Optimization Fundamentals

Optimization in machine learning and deep learning is the process of finding parameter values (e.g., weights) that minimize a loss function.
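As a minimal sketch of that process, the following example runs plain gradient descent on a one-parameter quadratic loss. The loss function, starting point, learning rate, and step count are all assumptions chosen so the minimum (at w = 3) is known in advance.

```python
def loss(w):
    """Toy loss with a single minimum at w = 3."""
    return (w - 3.0) ** 2

def loss_gradient(w):
    """Derivative of the toy loss with respect to w."""
    return 2.0 * (w - 3.0)

def gradient_descent(w0, lr=0.1, steps=100):
    """Repeatedly step against the gradient, starting from w0."""
    w = w0
    for _ in range(steps):
        w = w - lr * loss_gradient(w)
    return w

w_final = gradient_descent(w0=0.0)
print(f"w = {w_final:.6f}, loss = {loss(w_final):.3e}")
```

Real models have many parameters and non-convex losses, so convergence to a global minimum is not guaranteed there; the update rule itself is the same.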

Learn by Doing

Copy, paste, and run real code examples

import pandas as pd
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LinearRegression

# Load and prepare data
df = pd.read_csv('data.csv')
X = df[['feature1', 'feature2', 'feature3']]
y = df['target']

# Split data into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# Create and train the model
model = LinearRegression()
model.fit(X_train, y_train)

# Make predictions
predictions = model.predict(X_test)

# Evaluate model performance
score = model.score(X_test, y_test)
print(f"Model R² Score: {score:.4f}")

Latest Articles

In-depth tutorials and guides

Ready to Start Your Data Science Journey?

Join thousands of students and professionals learning Python, ML, and Data Science with Data Logos.